How does throttling work with real-time searches?

This follows up on "Why are we getting excessive number of alerts?"

We have an All time (real time) alert which produced 315 alerts in the first eight hours of the day.

When running the search query of the alert for these eight hours, we get six events.

I hear that throttling can solve the issue. How would it work?

Throttling keeps Splunk from sending the same alert every time the search runs. Say you send an alert when some value exceeds a threshold, and the alert runs every 5 minutes: it will fire on every run for as long as the value stays over that threshold. With throttling, you can have Splunk alert only once per chosen period, such as every hour. For a given host, for example, you would get one alert now and another an hour later only if the condition still holds, rather than one on every run. You can set the time period and the fields whose values must match for an alert to be throttled.
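
To make the idea concrete, here is a small Python sketch of the suppression logic described above. It is only an illustration, not Splunk's implementation: the `throttle` function, the `host` field, the host names, and the timings are all made up for the example.

```python
from datetime import datetime, timedelta

def throttle(triggers, period, key_fields):
    """Return the triggers that would actually send an alert.

    triggers:   list of dicts in time order, each with a 'time' (datetime)
                plus whatever event fields the alert carries.
    period:     timedelta during which repeats with the same key are suppressed.
    key_fields: field names whose values must match for suppression to apply.
    """
    last_sent = {}  # key -> time of the last alert that was actually sent
    sent = []
    for t in triggers:
        key = tuple(t.get(f) for f in key_fields)
        previous = last_sent.get(key)
        # Send if this key has never alerted, or the suppression period has passed.
        if previous is None or t["time"] - previous >= period:
            sent.append(t)
            last_sent[key] = t["time"]
    return sent

start = datetime(2024, 1, 1, 0, 0)
# Hypothetical data: a check every 5 minutes for 2 hours,
# with web01 over the threshold on every run.
triggers = [{"time": start + timedelta(minutes=5 * i), "host": "web01"}
            for i in range(24)]
# db01 goes over the threshold once, at 00:30.
triggers.append({"time": start + timedelta(minutes=30), "host": "db01"})
triggers.sort(key=lambda t: t["time"])

alerts = throttle(triggers, timedelta(hours=1), ["host"])
for a in alerts:
    print(a["time"].strftime("%H:%M"), a["host"])
```

In this made-up run, 25 trigger conditions turn into 3 alerts: web01 at 00:00 and 01:00, and db01 at 00:30. Throttling on `host` means one noisy host does not hide a different host that starts failing during the suppression period.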

OK, but does it apply to my case?

We have an All time (real time) alert which produced 315 alerts in the first eight hours of the day.

When running the search query of the alert for these eight hours, we get six events.

We have barely six events that satisfy the criteria.

Hi @danielbb

Have a look at this answer by @linu1988 and try the changes it suggests to throttle the alert for the suppression period you need.

https://answers.splunk.com/answers/409031/why-does-my-real-time-alert-continue-to-send-email.html

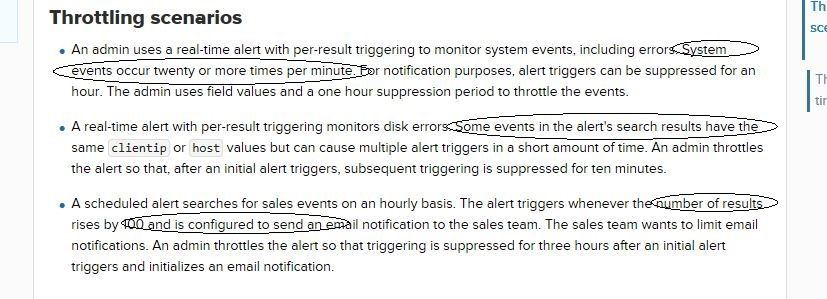

Please look at the scenarios in "Throttle configuration and scenarios".

As far as I understand, throttling is the process of consolidating multiple events into one alert, which isn't my case.