
How do I use a regular expression to extract all 22 entries of the Messages field, with

left boundary = `"Messages": [`

right boundary = `],`

In particular, I need the following extracted from some of the Messages fields:

"Transportation Expenses: Collision or Comprehensive coverage is required for vehicle 1.",

"Transportation Expenses: Collision or Comprehensive coverage is required for vehicle 2."

```
Message:{
"Activity History": [
{
"SequenceNumber": 1,
"Action": "Agent Id > asdf",
"Context": "Home",
"Messages": [],
"TimeStamp": "2017-02-07T13:30:07"
},
{
"SequenceNumber": 2,
"Action": "Initiate_Issuance > xasdf",
"Context": "Home",
"Messages": [],
"TimeStamp": "2017-02-07T13:30:07"
},
{
"SequenceNumber": 3,
"Action": "Create Easy Quote",
"Context": "Create Quote",
"Messages": [],
"TimeStamp": "2017-02-07T13:30:51"
},
{
"SequenceNumber": 4,
"Action": "Adding a driver from prefill",
"Context": "Create Quote>Prefill",
"Messages": [],
"TimeStamp": "2017-02-07T13:31:31"
},
{
"SequenceNumber": 5,
"Action": "Adding a driver from prefill",
"Context": "Create Quote>Prefill",
"Messages": [],
"TimeStamp": "2017-02-07T13:31:31"
},
{
"SequenceNumber": 6,
"Action": "Adding a vehicle from prefill",
"Context": "Contact",
"Messages": [],
"TimeStamp": "2017-02-07T13:31:34"
},
{
"SequenceNumber": 7,
"Action": "Adding a vehicle from prefill",
"Context": "Contact",
"Messages": [],
"TimeStamp": "2017-02-07T13:31:34"
},
{
"SequenceNumber": 8,
"Action": "residence-info",
"Context": "Contact",
"Messages": [],
"TimeStamp": "2017-02-07T13:32:02"
},
{
"SequenceNumber": 9,
"Action": "Validate Vehicle Information",
"Context": "Vehicles",
"Messages": [],
"TimeStamp": "2017-02-07T13:33:25"
},
{
"SequenceNumber": 10,
"Action": "Save for VIN",
"Context": "",
"Messages": [],
"TimeStamp": "2017-02-07T13:33:25"
},
{
"SequenceNumber": 11,
"Action": "Validate Driver Information",
"Context": "Drivers",
"Messages": [],
"TimeStamp": "2017-02-07T13:35:02"
},
{
"SequenceNumber": 12,
"Action": "Order CR",
"Context": "Reports",
"Messages": [],
"TimeStamp": "2017-02-07T13:35:03"
},
{
"SequenceNumber": 13,
"Action": "crossindices",
"Context": "Reports",
"Messages": [],
"TimeStamp": "2017-02-07T13:35:05"
},
{
"SequenceNumber": 14,
"Action": "crossindices",
"Context": "Reports",
"Messages": [],
"TimeStamp": "2017-02-07T13:35:05"
},
{
"SequenceNumber": 15,
"Action": "Order LIS",
"Context": "Reports",
"Messages": [],
"TimeStamp": "2017-02-07T13:35:09"
},
{
"SequenceNumber": 16,
"Action": "Fetch CR",
"Context": "Reports",
"Messages": [],
"TimeStamp": "2017-02-07T13:35:14"
},
{
"SequenceNumber": 17,
"Action": "rates",
"Context": "Reports",
"Messages": [
"Transportation Expenses: Collision or Comprehensive coverage is required for vehicle 1.",
"Transportation Expenses: Collision or Comprehensive coverage is required for vehicle 2."
],
"TimeStamp": "2017-02-07T13:35:48"
},
{
"SequenceNumber": 18,
"Action": "package-rates",
"Context": "Reports",
"Messages": [
"Transportation Expenses: Collision or Comprehensive coverage is required for vehicle 1.",
"Transportation Expenses: Collision or Comprehensive coverage is required for vehicle 2."
],
"TimeStamp": "2017-02-07T13:35:49"
},
{
"SequenceNumber": 19,
"Action": "Update Premium > rates",
"Context": "Coverages",
"Messages": [
"Transportation Expenses: Collision or Comprehensive coverage is required for vehicle 1.",
"Transportation Expenses: Collision or Comprehensive coverage is required for vehicle 2."
],
"TimeStamp": "2017-02-07T13:37:18"
},
{
"SequenceNumber": 20,
"Action": "Policy Package > package-rates",
"Context": "Coverages",
"Messages": [
"Transportation Expenses: Collision or Comprehensive coverage is required for vehicle 1.",
"Transportation Expenses: Collision or Comprehensive coverage is required for vehicle 2."
],
"TimeStamp": "2017-02-07T13:37:29"
},
{
"SequenceNumber": 21,
"Action": "Print Documents",
"Context": "Coverages",
"Messages": [],
"TimeStamp": "2017-02-07T13:38:04"
},
{
"SequenceNumber": 22,
"Action": "Save Popup>Save & Exit",
"Context": "Coverages",
"Messages": [],
"TimeStamp": "2017-02-07T13:38:29"
}
]
}
```
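As a rough illustration of the boundary-based extraction being asked about, here is a Python sketch. It uses the `re` module as a stand-in for Splunk's PCRE engine, and the two engines differ in some details, so treat this as an approximation. It takes `"Messages": [` as the left boundary and `],` as the right boundary, then pulls the individual quoted messages out of each array. `SAMPLE` is a shortened, hypothetical excerpt of the event above, and `extract_messages` is a hypothetical helper name.

```python
import re

# Shortened, hypothetical excerpt of the event in the question.
SAMPLE = '''Message:{
"Activity History": [
{
"SequenceNumber": 16,
"Action": "Fetch CR",
"Context": "Reports",
"Messages": [],
"TimeStamp": "2017-02-07T13:35:14"
},
{
"SequenceNumber": 17,
"Action": "rates",
"Context": "Reports",
"Messages": [
"Transportation Expenses: Collision or Comprehensive coverage is required for vehicle 1.",
"Transportation Expenses: Collision or Comprehensive coverage is required for vehicle 2."
],
"TimeStamp": "2017-02-07T13:35:48"
}
]
}'''

# Left boundary: "Messages": [   Right boundary: ],
# [^\]]* cannot cross a "]", so each match stays inside one array,
# and an empty array "[]," yields an empty capture.
ARRAY_RE = re.compile(r'"Messages": \[([^\]]*)\],')

# Each message is a double-quoted string inside the captured array body.
MESSAGE_RE = re.compile(r'"([^"]*)"')


def extract_messages(raw: str) -> list[list[str]]:
    """Return one list of messages per "Messages" array, in order."""
    return [MESSAGE_RE.findall(body) for body in ARRAY_RE.findall(raw)]


if __name__ == "__main__":
    for i, messages in enumerate(extract_messages(SAMPLE), start=1):
        print(i, messages)
```

Run against the full event in the question, this approach should return 22 lists. The two "Transportation Expenses" messages would appear in the lists for SequenceNumber 17 through 20.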


Hi,

A bit of configuration should get you most of the way there. I tried it with your sample data.

Assuming your data is being written to a file, the inputs.conf in my example looks like this:

```
[monitor:///path-to-folder-where-file-is]
crcSalt = <SOURCE>
host =
index = json-test
sourcetype = not-json
```

If your data is coming in via a different input, that shouldn't be a problem. In the example above I'm just setting the sourcetype to 'not-json', so that in props.conf I can do:

```
[not-json]
TRUNCATE = 0
SHOULD_LINEMERGE = false
LINE_BREAKER = (},)
MAX_EVENTS = 100000
TIME_PREFIX = "TimeStamp":
TIME_FORMAT = %Y-%m-%dT%H:%M:%S
```

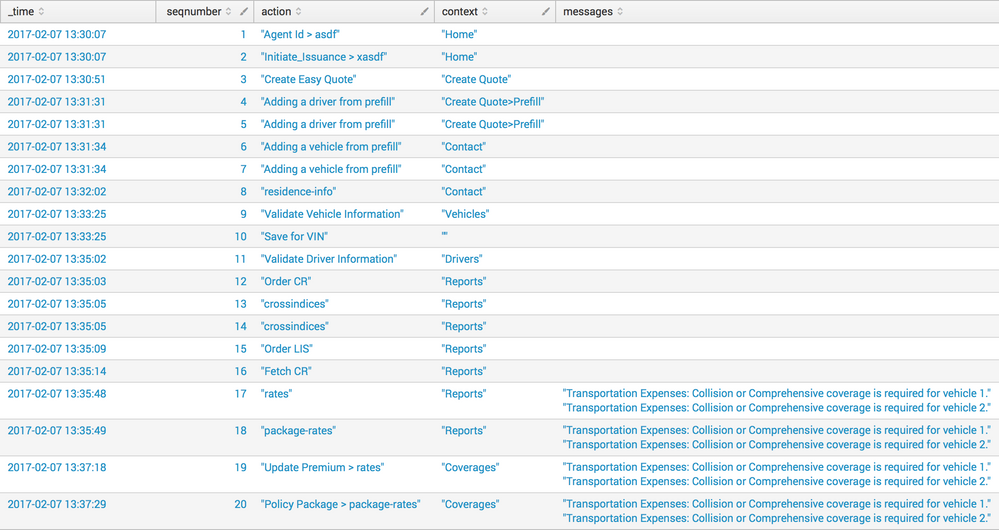

This means that the different SequenceNumber blocks will appear as individual events, with the timestamp from that block:
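To make the event breaking described above more concrete, here is a small Python sketch that mimics how `LINE_BREAKER` treats its first capturing group. The text matched by that group is discarded, the previous event ends where the group starts, and the next event begins where it ends. This only approximates Splunk's line breaking, since it ignores `TRUNCATE`, timestamp extraction, and the rest of the pipeline. `break_events` and `RAW` are hypothetical names.

```python
import re


def break_events(raw: str, line_breaker: str) -> list[str]:
    """Split raw text into events roughly the way LINE_BREAKER does.

    The first capturing group in line_breaker marks the boundary; its text
    is dropped and belongs to neither neighbouring event.
    """
    pattern = re.compile(line_breaker)
    events = []
    start = 0
    for match in pattern.finditer(raw):
        events.append(raw[start:match.start(1)])
        start = match.end(1)
    events.append(raw[start:])
    # Surrounding whitespace is stripped here only to make the output readable.
    return [event.strip() for event in events if event.strip()]


# Hypothetical, shortened input in the same shape as the question's data.
RAW = '''{
"SequenceNumber": 1,
"TimeStamp": "2017-02-07T13:30:07"
},
{
"SequenceNumber": 2,
"TimeStamp": "2017-02-07T13:30:07"
}'''

if __name__ == "__main__":
    for event in break_events(RAW, r"(},)"):
        print("---")
        print(event)
```

Because the `},` is consumed as the boundary, each resulting event (except the last) loses its closing brace. The events are therefore no longer standalone JSON, which is one reason regex extractions rather than JSON parsing are used on them.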

You could then add some field extractions to the same props.conf stanza:

```
EXTRACT-seqnumber = "SequenceNumber": (?<seqnumber>[^,]+),
EXTRACT-action = "Action": (?<action>[^,]+),
EXTRACT-context = "Context": (?<context>[^,]+),
EXTRACT-messages = "Messages": \[[\n\r]+(?<messages>[^]]+)\],
```
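The four `EXTRACT-` patterns can be tried outside Splunk before you deploy them. The Python sketch below runs them against one event as it might look after the line breaking above. It makes two transcription changes. Python's `re` spells named groups `(?P<name>...)` rather than PCRE's `(?<name>...)`. The `[^]]` class is also written as the equivalent `[^\]]`. Otherwise the expressions are unchanged. `EVENT` is a hypothetical excerpt, and only the first match per pattern is kept, roughly like a single-valued `EXTRACT-`.

```python
import re

# PCRE (?<name>...) rewritten as Python's (?P<name>...); [^]] written as [^\]].
EXTRACTIONS = {
    "seqnumber": r'"SequenceNumber": (?P<seqnumber>[^,]+),',
    "action": r'"Action": (?P<action>[^,]+),',
    "context": r'"Context": (?P<context>[^,]+),',
    "messages": r'"Messages": \[[\n\r]+(?P<messages>[^\]]+)\],',
}

# Hypothetical event text: the SequenceNumber 17 block after line breaking.
EVENT = '''{
"SequenceNumber": 17,
"Action": "rates",
"Context": "Reports",
"Messages": [
"Transportation Expenses: Collision or Comprehensive coverage is required for vehicle 1.",
"Transportation Expenses: Collision or Comprehensive coverage is required for vehicle 2."
],
"TimeStamp": "2017-02-07T13:35:48"'''


def extract_fields(event: str) -> dict[str, str]:
    """Apply each pattern once and keep the named group when it matches."""
    fields = {}
    for name, pattern in EXTRACTIONS.items():
        match = re.search(pattern, event)
        if match:
            fields[name] = match.group(name)
    return fields


if __name__ == "__main__":
    for key, value in extract_fields(EVENT).items():
        print(f"{key} = {value!r}")
```

The captured `action` and `context` values keep their surrounding double quotes, which is what the sed-mode `rex` at the end of this answer strips. For an event whose array is empty (`"Messages": [],`), the `messages` pattern does not match, because `[\n\r]+` requires at least one line break after the `[`.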

These would then let you run searches like:

```
index=json-test
| table _time seqnumber action context messages
| makemv delim="," messages
| sort +seqnumber
```
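Roughly speaking, `makemv delim=","` turns the single `messages` string into a multivalue field by splitting on commas, and `sort +seqnumber` orders the rows. The Python sketch below imitates those two steps on already-extracted rows. It is an illustration only: `rows` is hypothetical data, `make_multivalue` is a hypothetical helper, and stripping whitespace from each part is a convenience added here, not a claim about what `makemv` does.

```python
# Hypothetical rows, as the EXTRACT- settings might produce them (quotes kept).
rows = [
    {"seqnumber": "18", "messages": '"msg A",\n"msg B"\n'},
    {"seqnumber": "2", "messages": None},
    {"seqnumber": "17", "messages": '"msg A",\n"msg B"\n'},
]


def make_multivalue(value, delim=","):
    """Split a delimited string into a list, like makemv delim=...; None stays None."""
    if value is None:
        return None
    # Stripping surrounding whitespace is a convenience added in this sketch.
    return [part.strip() for part in value.split(delim)]


table = [
    {**row, "messages": make_multivalue(row["messages"])}
    # Compare as integers so "2" sorts before "17" and "18".
    for row in sorted(rows, key=lambda r: int(r["seqnumber"]))
]

for row in table:
    print(row["seqnumber"], row["messages"])
```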

Giving you a table such as:

I'm not 100% sure that's exactly what you're looking for, but it might get you closer, or at least give you a few other things to try.

Oh, and if you want to strip the quotes from the values in the fields later, you could use something like rex in sed mode:

```
| rex mode=sed field=context "s/\"([^\"]*)\"/\1/g"
```
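The sed expression replaces each double-quoted run with its contents, so `"Reports"` becomes `Reports`. Python's `re.sub` accepts the same pattern and the `\1` backreference, which makes it a convenient way to check the expression before using it. This runs outside Splunk, and `strip_quotes` is a hypothetical helper.

```python
import re

# Same regex as s/\"([^\"]*)\"/\1/g; the \" escapes are only needed inside the
# SPL string literal, so they are dropped here.
QUOTED = re.compile(r'"([^"]*)"')


def strip_quotes(value: str) -> str:
    """Replace every "..." run with its inner text (re.sub replaces all, like /g)."""
    return QUOTED.sub(r"\1", value)


print(strip_quotes('"Reports"'))               # Reports
print(strip_quotes('"Create Quote>Prefill"'))  # Create Quote>Prefill
```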


Because it's JSON, just do this in props.conf:

```
[your_sourcetype]
INDEXED_EXTRACTIONS = json
KV_MODE = none
```


If the event contains ONLY the JSON string, that should work, judging by what it looks like. But because the JSON is embedded in regular text, Splunk can't parse it as JSON. Remove the "Message:" prefix from the front of the event and it should parse properly as JSON.
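A quick way to see the point about the `Message:` prefix is to hand the event to a strict JSON parser. The Python sketch below uses the standard-library `json` module as a stand-in, without claiming it replicates Splunk's JSON handling. The event with the prefix fails to parse. The same text with the prefix removed parses and exposes every `Messages` array as a list. `RAW` is a shortened, hypothetical version of the event, and `parse_embedded` is a hypothetical helper.

```python
import json

# Shortened, hypothetical event: JSON embedded after a "Message:" prefix.
RAW = '''Message:{
"Activity History": [
{"SequenceNumber": 16, "Messages": []},
{"SequenceNumber": 17, "Messages": [
"Transportation Expenses: Collision or Comprehensive coverage is required for vehicle 1.",
"Transportation Expenses: Collision or Comprehensive coverage is required for vehicle 2."
]}
]
}'''


def parse_embedded(raw: str, prefix: str = "Message:") -> dict:
    """Drop a leading text prefix, then parse the rest as JSON."""
    if raw.startswith(prefix):
        raw = raw[len(prefix):]
    return json.loads(raw)


try:
    json.loads(RAW)  # fails: "Message:" is not valid JSON
except json.JSONDecodeError as err:
    print("with prefix:", err)

doc = parse_embedded(RAW)
for entry in doc["Activity History"]:
    print(entry["SequenceNumber"], entry["Messages"])
```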


Oh sorry, I just edited the comment above. It's not true JSON; it's actually JSON data embedded in regular text. We tried the following, but it doesn't return all the values:

```
...... | rex max_match=100 "(?<activity_history>{[^}]+})" | mvexpand activity_history | spath input=activity_history
```
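Here is one possible reason the attempt above misses values (an assumption, not confirmed in this thread). `[^}]+` is allowed to cross an opening brace, so the first match starts at the outer `{` right after `Message:` and runs through the end of the first activity entry. That produces text that is not a single JSON object. Excluding both braces with `[^{}]+` keeps each match inside one innermost object. The Python sketch below compares the two patterns on a shortened, hypothetical event and parses each match with `json.loads`, loosely standing in for `spath`. `parse_blocks` is a hypothetical helper.

```python
import json
import re

# Shortened, hypothetical event with the JSON embedded after "Message:".
RAW = '''Message:{
"Activity History": [
{
"SequenceNumber": 1,
"Messages": []
},
{
"SequenceNumber": 17,
"Messages": [
"Transportation Expenses: Collision or Comprehensive coverage is required for vehicle 1."
]
}
]
}'''

ORIGINAL = re.compile(r"\{[^}]+\}")    # the pattern from the question, braces escaped
INNERMOST = re.compile(r"\{[^{}]+\}")  # cannot contain another "{", so only leaf objects


def parse_blocks(pattern: re.Pattern, raw: str) -> list:
    """Return the parsed object for each match, or None where json.loads fails."""
    results = []
    for block in pattern.findall(raw):
        try:
            results.append(json.loads(block))
        except json.JSONDecodeError:
            results.append(None)
    return results


print(parse_blocks(ORIGINAL, RAW))   # first entry comes back as None
print(parse_blocks(INNERMOST, RAW))  # both entries parse
```

In SPL the corresponding change would be to the character class inside the `rex` capture group, for example `(?<activity_history>{[^{}]+})`. Whether that alone recovers all 22 entries in Splunk has not been verified here.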


Is your raw data in Splunk true JSON, or JSON data embedded in regular text?


Sorry, it's not true JSON; it's actually JSON data embedded in regular text. We tried the following, but it doesn't return all the values:

```
| rex max_match=100 "(?<activity_history>{[^}]+})" | mvexpand activity_history | spath input=activity_history
```